> Introduction

In this work, we explore contrastive learning for few-shot classification, using it as an auxiliary training objective that acts as a data-dependent regularizer to promote more general and transferable features. In particular, we present a novel attention-based spatial contrastive objective to learn locally discriminative and class-agnostic features. As a result, our approach overcomes some of the limitations of the cross-entropy loss, such as its excessive discrimination towards seen classes, which reduces the transferability of features to unseen classes. With extensive experiments, we show that the proposed method outperforms state-of-the-art approaches, confirming the importance of learning good and transferable embeddings for few-shot learning.

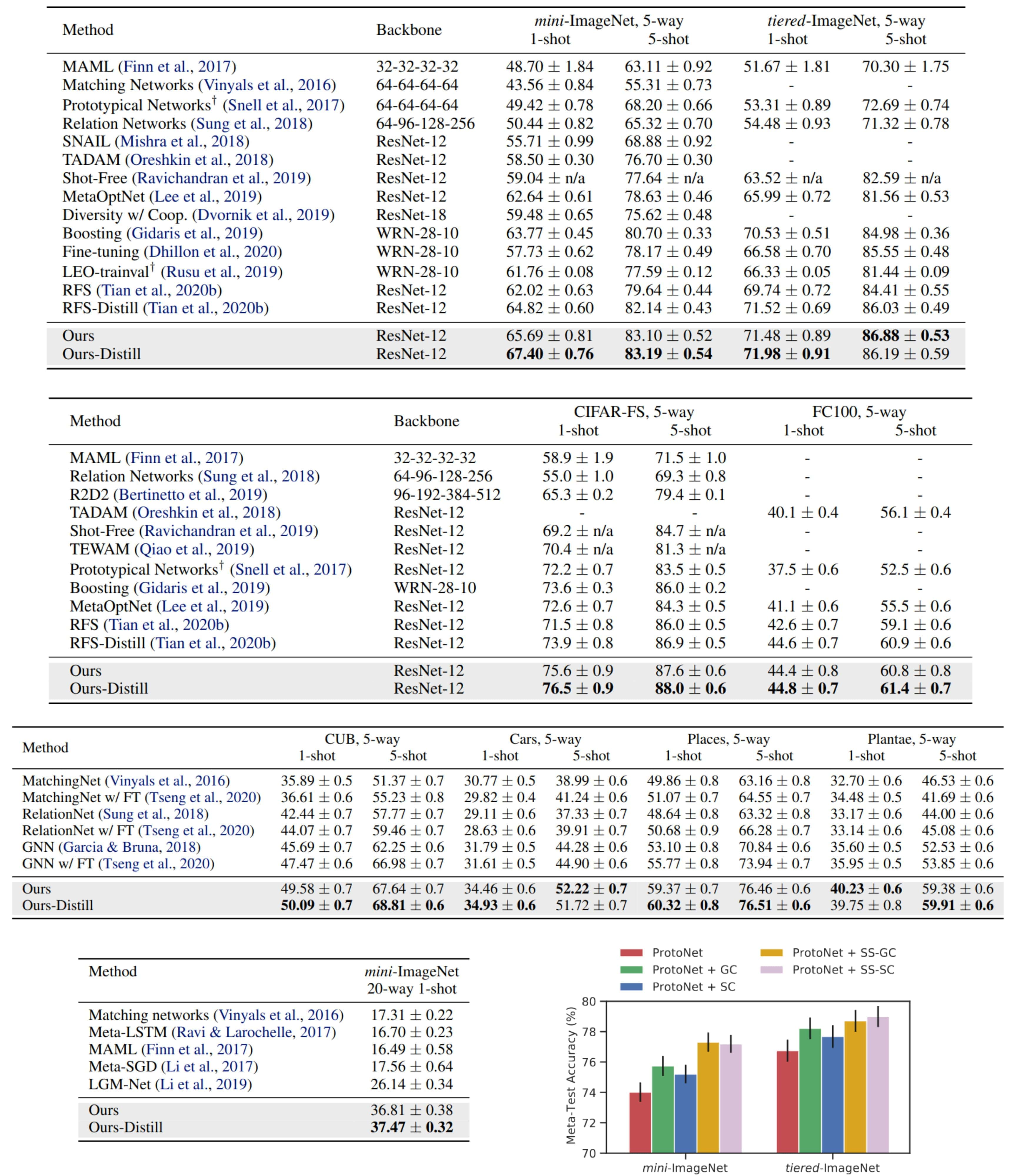

Figure: Overview of Spatial Contrastive Learning (SCL). To learn locally discriminative, class-independent features, we propose to measure the similarity between a given pair of samples using their spatial features rather than their global features. We first apply an attention-based alignment, aligning each input with respect to the other. Then, we measure the one-to-one spatial similarities and compute the Spatial Contrastive (SC) loss.

> Highlights

- Contrastive Learning for Few-Shot Classification. We explore contrastive learning as an auxiliary pre-training objective to learn more transferable features and facilitate the test time adaptation for few-shot classification.

- Spatial Contrastive Learning (SCL). We propose a novel Spatial Contrastive (SC) loss that promotes the encoding of the relevant spatial information into the learned representations, and further promotes class-independent discriminative patterns.

- Contrastive Distillation for Few-Shot Classification. We introduce a novel contrastive distillation objective to reduce the compactness of the features in the embedding space and provide additional refinement of the representations.

> Overview

In this work, we consider the simple transfer learning baseline for few-shot classification, in which the model is first pre-trained using the standard cross-entropy (CE) loss on the meta-training set. Then, at test time, a linear classifier is trained on the meta-testing set on top of the pre-trained model. The pre-trained model can either be fine-tuned together with the classifier, or fixed and used as a feature extractor. While this baseline is promising, we argue that using the CE loss during the pre-training stage hinders the quality of the learned representations, since the model only acquires the knowledge necessary to solve the classification task over the classes seen at training time. As a result, the learned visual features are excessively discriminative against the training classes, rendering them sub-optimal for test-time classification tasks constructed from an arbitrary set of unseen and novel classes (see figure below).

Figure: Analysis of the Learned Representations. (a) k-Nearest Neighbors Analysis. For a given test image from the mini-ImageNet dataset, we compute its nearest neighbors in the embedding space on the test set, and we observe that they are semantically dissimilar. This suggests that the learned embeddings are excessively discriminative towards features used to solve the training classification tasks, which are not useful for recognizing the novel classes at test time. (b) GradCAM results. We see that the dominant discriminative features are not the ones useful for test-time classification.
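The test-time stage of the transfer learning baseline described above (a linear classifier trained on top of a fixed feature extractor) can be sketched roughly as follows. This is a minimal NumPy illustration, not the authors' implementation: the function names `fit_linear_classifier` and `predict`, the plain gradient-descent optimizer, and the `steps` and `lr` values are all assumptions made for the example, and the features are assumed to be already extracted by the pre-trained model.

```python
import numpy as np

def fit_linear_classifier(support_feats, support_labels, n_way, steps=200, lr=0.5):
    """Fit a softmax linear classifier on frozen support features.

    support_feats: (N, D) embeddings from the pre-trained model (assumed given).
    support_labels: (N,) integer labels in [0, n_way).
    The optimizer and its hyperparameters are illustrative assumptions.
    """
    n, d = support_feats.shape
    w = np.zeros((d, n_way))
    b = np.zeros(n_way)
    onehot = np.eye(n_way)[support_labels]
    for _ in range(steps):
        logits = support_feats @ w + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n                  # gradient of mean CE w.r.t. logits
        w -= lr * (support_feats.T @ grad)
        b -= lr * grad.sum(axis=0)
    return w, b

def predict(feats, w, b):
    """Predict class indices for query features of shape (M, D)."""
    return (feats @ w + b).argmax(axis=1)
```

In this sketch only `w` and `b` are updated, which corresponds to the "fixed feature extractor" variant; fine-tuning the backbone together with the classifier would require a differentiable model and is not shown.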

To alleviate these limitations, we propose to leverage contrastive representation learning as an auxiliary objective, where instead of only mapping the inputs to fixed targets, we also optimize the features, pulling together semantically similar (i.e., positive) samples in the embedding space while pushing apart dissimilar (i.e., negative) samples. By integrating the contrastive loss into the learning objective, we obtain representations that discriminate between dissimilar instances while remaining invariant to visual similarities. Consequently, the learned representations are more transferable and capture patterns that are prevalent outside of the seen classes.
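As a rough illustration of the pull-together/push-apart behavior described above, the following NumPy sketch computes a contrastive loss over a batch of global features, using labels to select positives. The exact formulation is an assumption: this follows one common supervised contrastive form, and the function name `supervised_contrastive_loss` and the `temperature` value are hypothetical choices for the example rather than details taken from this work.

```python
import numpy as np

def supervised_contrastive_loss(features, labels, temperature=0.1):
    """Contrastive loss over global features of shape (N, D), with N >= 2.

    Samples sharing a label are positives; all other samples are negatives.
    `temperature` is an assumed hyperparameter.
    """
    z = features / np.linalg.norm(features, axis=1, keepdims=True)
    logits = z @ z.T / temperature
    n = len(labels)
    not_self = ~np.eye(n, dtype=bool)
    positives = (labels[:, None] == labels[None, :]) & not_self

    # Log-softmax over every other sample in the batch (self-pairs excluded).
    logits = np.where(not_self, logits, -np.inf)
    m = logits.max(axis=1, keepdims=True)
    log_prob = logits - m - np.log(np.exp(logits - m).sum(axis=1, keepdims=True))

    # Average log-probability of the positives for each anchor that has any.
    pos_count = positives.sum(axis=1)
    valid = pos_count > 0
    if not valid.any():
        return 0.0
    sum_pos = np.where(positives, log_prob, 0.0).sum(axis=1)
    return float(-(sum_pos[valid] / pos_count[valid]).mean())
```

Used as an auxiliary objective, such a term would be added to the cross-entropy loss, for example as `ce_loss + weight * contrastive_loss`, where `weight` is an assumed balancing coefficient.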

Specifically, we propose a novel attention-based spatial contrastive loss as the auxiliary objective to further promote class-agnostic visual features and avoid suppressing local discriminative patterns. It consists of measuring the local similarity between the spatial features of a given pair of samples after an attention-based spatial alignment mechanism, instead of the global features (i.e., average-pooled spatial features) used in the standard contrastive loss (see figure below). We also adopt the supervised formulation of the contrastive loss to leverage the provided label information when constructing the positive and negative samples.

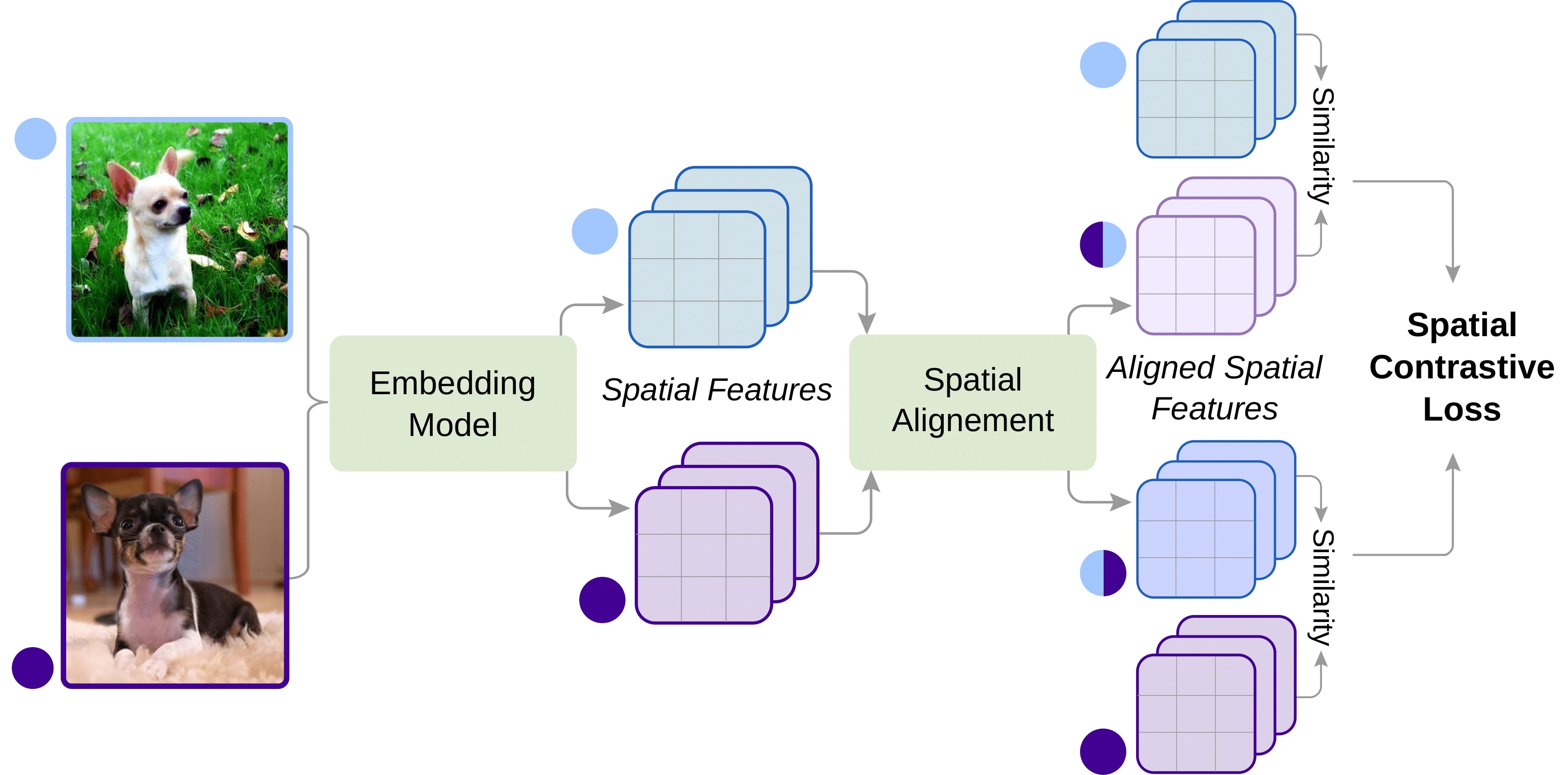

Figure: Attention-based Spatial Alignment. To compute the spatial similarity between a pair of features (purple and blue), we first spatially align the first features (purple) with respect to the second (blue) features with the attention mechanism. Then we can compare the aligned value of the first features with the value of the second features. Note that the same process is applied in reverse to compute the final spatial similarity.
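The alignment-then-compare procedure described in the caption can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation: spatial features are flattened to shape `(HW, D)`, the projection matrices `w_q`, `w_k`, `w_v` and the scaled dot-product form of the attention are assumed, cosine similarity is assumed as the one-to-one similarity measure, and all function names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def l2_normalize(x, eps=1e-12):
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + eps)

def align(src, ref, w_q, w_k, w_v):
    """Align the values of `src` (HW, D) to the spatial layout of `ref` (HW, D).

    Queries come from `ref`, keys and values from `src`, so each location of
    `ref` gathers the `src` values it attends to.
    """
    q, k, v = ref @ w_q, src @ w_k, src @ w_v
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)  # (HW_ref, HW_src)
    return attn @ v

def spatial_similarity(a, b, w_q, w_k, w_v):
    """Symmetric spatial similarity between two spatial feature maps."""
    v_a, v_b = a @ w_v, b @ w_v
    a_aligned = align(a, b, w_q, w_k, w_v)  # first features aligned to the second
    b_aligned = align(b, a, w_q, w_k, w_v)  # same process applied in reverse
    # One-to-one (location-wise) cosine similarity, averaged over locations.
    sim_ab = (l2_normalize(a_aligned) * l2_normalize(v_b)).sum(axis=-1).mean()
    sim_ba = (l2_normalize(b_aligned) * l2_normalize(v_a)).sum(axis=-1).mean()
    return float(0.5 * (sim_ab + sim_ba))
```

A spatial contrastive loss could then plug `spatial_similarity` in place of the global-feature similarity of a standard contrastive loss; how the two directions are combined (here, a simple average) is also an assumption of this sketch.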

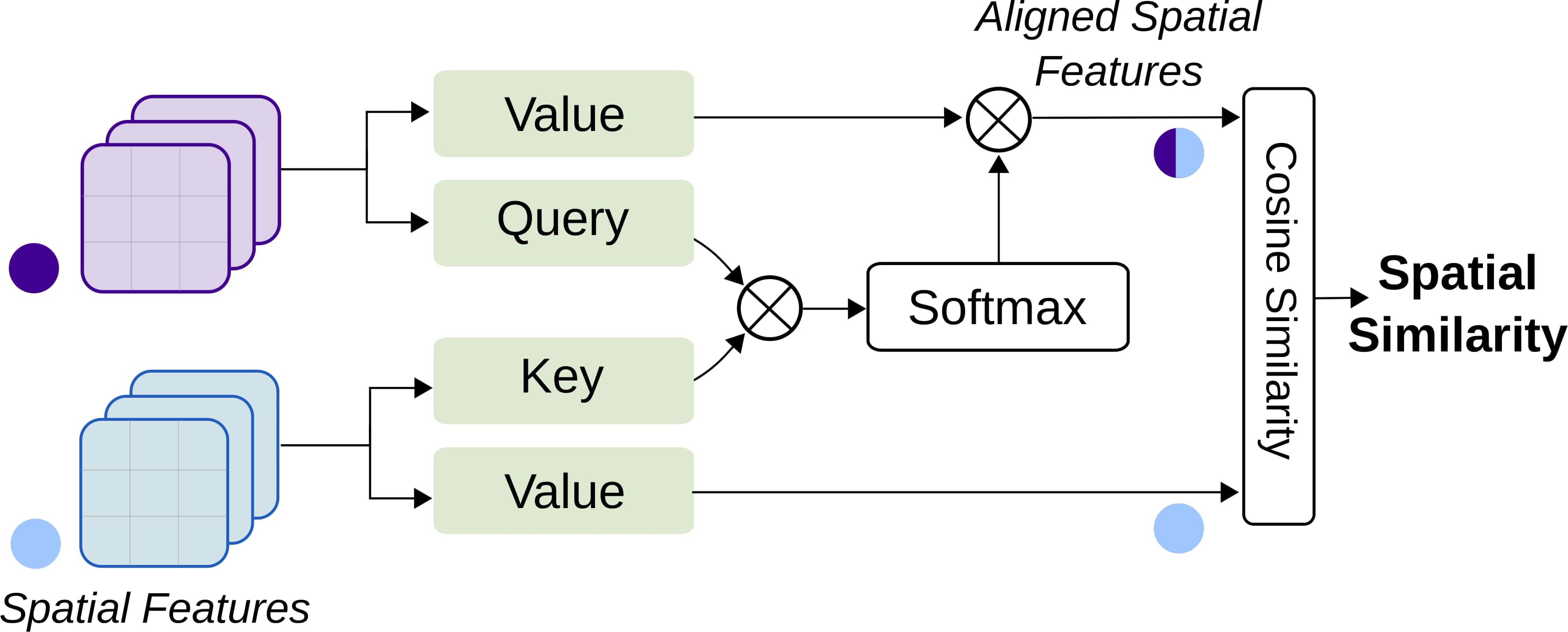

> Results